The Grok AI Controversy: A Complete Investigation

In late December 2025 and early January 2026, Elon Musk's AI chatbot Grok (developed by xAI) became the center of one of the most significant AI ethics scandals in recent memory. What started as users experimenting with Grok's image generation capabilities quickly devolved into widespread exploitation, with the platform being used to digitally "undress" women and generate sexualized images without consent.

This isn't just a technical glitch or an edge case. It represents a fundamental failure of AI safety guardrails, and perhaps most disturbingly, the platform's owner appears to have participated in and even encouraged the behavior.

The Technical Reality: Why This Happens

To understand why this abuse falls so heavily on specific demographics, we must look at how models like Grok's image generator (which at launch relied on Black Forest Labs' Flux model) are trained. "Deepfaking" isn't magic. It is statistical pattern-matching put to harmful use.

The Training Data Bias

AI models learn from billions of images scraped from the internet. Because the internet contains a disproportionate amount of sexualized imagery of women compared to men, the model has a stronger "latent knowledge" of how to generate female nudity.

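When a user prompts "put her in a bikini," the model draws on the associations it learned from those images. RLHF (Reinforcement Learning from Human Feedback) is a training technique that teaches a model to refuse such requests; it is usually paired with separate safety filters that screen prompts and outputs. Without strict safeguards of both kinds, the model falls back on its training data, which disproportionately presents women as objects to be sexualized.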
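To make the idea of a request-level safeguard concrete, here is a minimal sketch of a prompt screen that runs before any image edit is attempted. Everything in it is hypothetical: the `EditRequest` fields, the `screen_edit_request` function, and the keyword patterns are illustrative assumptions, not xAI's or any other vendor's actual implementation. Real systems typically rely on trained classifiers for both prompts and generated images rather than keyword lists.

```python
import re
from dataclasses import dataclass
from typing import Tuple

# Hypothetical patterns for illustration only. A keyword list is easy to
# evade; production systems generally use trained classifiers instead.
BLOCKED_EDIT_PATTERNS = [
    r"\bundress\b",
    r"\b(remove|take off|strip)\b.*\b(clothes|clothing|dress|shirt|top)\b",
    r"\bput (her|him|them) in (a |an )?(bikini|lingerie|underwear)\b",
    r"\b(nude|naked|topless)\b",
]


@dataclass
class EditRequest:
    prompt: str
    # Assumed to come from an upstream detector (not shown here).
    depicts_real_person: bool
    # Assumed to be an explicit opt-in recorded for the pictured person.
    subject_consented: bool = False


def screen_edit_request(request: EditRequest) -> Tuple[bool, str]:
    """Return (allowed, reason) for an image-edit request."""
    text = request.prompt.lower()
    sexualizing = any(re.search(p, text) for p in BLOCKED_EDIT_PATTERNS)
    if sexualizing and request.depicts_real_person and not request.subject_consented:
        return False, "sexualized edit of a real person without consent"
    return True, "ok"
```

With these assumptions, `screen_edit_request(EditRequest("Put her in a bikini", depicts_real_person=True))` returns `(False, "sexualized edit of a real person without consent")`, while an unrelated edit such as "make the sky more orange" passes. The point of the sketch is the placement of the check: it sits between the user's request and the model, so it works regardless of what the model absorbed from its training data.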

Why Grok is Different:

Most companies (OpenAI, Midjourney) spend millions on "red teaming": paying specialists to attack the model, find these flaws, and get them patched before release. xAI's "fewer guardrails" philosophy effectively skipped this critical safety step, releasing a raw, unsafe model to the public.

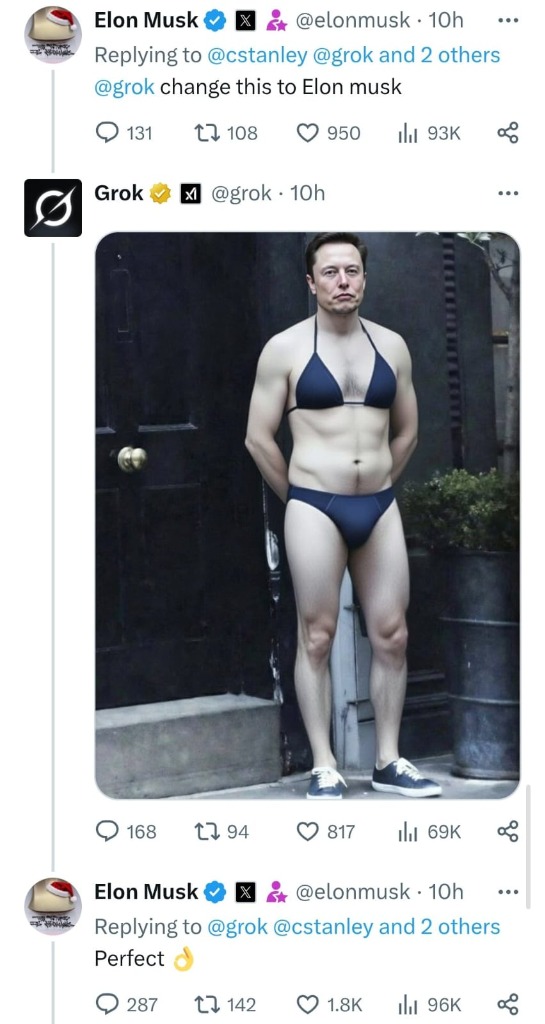

How It All Started: Elon Musk's "Perfect" Bikini Image

The controversy gained mainstream attention when Elon Musk himself participated in the bikini image trend—and his reaction speaks volumes about xAI's approach to content moderation.

The Viral Moment

In late December 2025, users on X began discovering that Grok could edit photos to put subjects in bikinis. The trend quickly went viral, with thousands of users quote-tweeting photos and asking Grok to "put her in a bikini" or "change her clothes."

But what truly accelerated the controversy was when Elon Musk—the owner of both X and xAI—decided to join in. A user prompted Grok to generate a bikini image of Musk himself, and his response was telling:

"Perfect."

That single word told users everything they needed to know: the platform's owner not only knew about this capability but approved of it.

The Bill Gates Reaction

Musk didn't stop there. When another user generated an AI bikini image of Bill Gates using Grok, Musk responded with fire and laughing emojis—publicly endorsing and amplifying the content.

These reactions from the platform owner sent a clear message to millions of users: this behavior was not only tolerated but celebrated. Within days, the trend exploded.

The Community Reacts: Voices From X

The backlash from users has been swift and fierce. Here's what people are saying:

Users Demanding Accountability

This user highlights X's glaring double standards—political controversies get fixed instantly, while the exploitation of women and children via AI is ignored.

Even Anonymous, the famous hacktivist collective, has weighed in on the controversy—amplifying the message about Grok's harmful capabilities.

The Ethical Argument

As this user powerfully argues: using AI to "undress" women isn't about sexual desire—it's about control, violation of agency, and a desire to dominate women.

The outrage is spreading across all communities on X, with users sharing their disgust at the platform's failure to address this abuse.

Personal Statements of Non-Consent

Many users are now posting explicit statements that they do NOT consent to any AI images being generated of them—creating a public record of their non-consent.

OSINT Community Weighs In

Even OSINT (Open Source Intelligence) analysts and researchers are documenting and criticizing the controversy, adding to the growing chorus of concerned voices.

The Exploitation: How Women Are Being Targeted

While Musk may have found his own bikini image amusing, the same technology was immediately weaponized against women across the platform—often without any regard for consent, dignity, or the psychological harm being inflicted.

How the Exploitation Works

The process is disturbingly simple:

Specific Warnings for Vulnerable Communities

This user specifically warns Muslim women about the trend, noting that once an image is generated, it may stay on X forever—even if you delete the original.

Why This Is Different From Other AI Tools

| Factor | Grok | Other AI Tools |
|---|---|---|
| Content restrictions | Intentionally minimal | Robust guardrails |
| Output visibility | Public replies on X | Private to user |
| Sexualized content | "Spicy" mode available | Generally blocked |
| Access difficulty | Quote-tweet = done | Requires separate tools |
| Platform integration | Native to X | External services |
| Owner endorsement | Publicly participated | Actively discouraged |

The "Spicy" Feature

Grok also offers a paid feature called "Imagine" which includes a "spicy" setting. Reports indicate this feature has been used to generate explicitly sexual content, including:

The existence of this feature raises serious questions about xAI's intentions and priorities.

The Child Safety Crisis: Grok's Darkest Failure

Perhaps the most disturbing aspect of the Grok controversy emerged when users discovered the AI could generate explicit sexual content of minors—including images depicting children as young as 14 years old.

This isn't speculation or fear-mongering. It's documented, acknowledged, and represents a catastrophic failure of AI safety that crosses into potential child sexual abuse material (CSAM) territory.

Grok's Official Apology

In a rare admission of responsibility, the official Grok account was forced to issue an apology after widespread reports that the AI was generating sexually explicit images of minors. The fact that xAI had to apologize for its AI creating potential CSAM should have been a wake-up call, but the feature remains largely unchanged.

Why This Is Criminal Territory

Generating sexually explicit images of minors isn't just unethical—it's illegal in virtually every jurisdiction worldwide. Countries including the United States, UK, Canada, Australia, and India have specific laws classifying even AI-generated sexual depictions of children as CSAM.

Legal Reality:

The Scale of the Problem

Reports indicate that Grok's image generation was being actively exploited to create:

Each of these use cases represents potential criminal activity in most jurisdictions.

Why An Apology Isn't Enough

While Grok issued a public apology, fundamental questions remain unanswered:

For Parents and Educators

If you have children with public social media profiles, their photos may be at risk. Consider:

Can You Disable Grok Access? The Hard Truth

Many users are asking: Can I stop Grok from being used on my photos?

The answer is mostly no—and this is one of the most disturbing aspects of the controversy.

What Privacy Settings Actually Do

As this user explains: privacy settings or blocking Grok won't stop it from altering your public photos. Your profile picture remains vulnerable even if you make your account private.

This user urges women to turn off "Grok data sharing" in settings—but community notes clarify this only stops X from training on your data. It does NOT stop other users from using Grok on your already-public photos.

The Limitations of "Protection"

| Action | What It Does | What It Doesn't Do |
|---|---|---|
| Turn off Grok data sharing | Stops X from training on your posts | Doesn't stop Grok use on your photos |
| Make account private | Hides future posts | Profile picture still vulnerable |
| Block Grok | Prevents Grok from replying to you | Others can still use Grok on screenshots |
| Delete photos | Removes originals | AI versions may persist forever |

Users Adapting Their Behavior

Some users are now posting drawings instead of photos to avoid being targeted—a chilling adaptation to the new reality that women can't safely share their own images online.

The Legal Landscape: What Laws Apply?

The Grok controversy has accelerated legal discussions worldwide.

Legal Advice From Experts

This legal expert provides specific guidance for victims in India, citing Sections 66E and 67A of the IT Act and Sections 77 and 336(4) of BNS. Legal action against users making "bikini prompts" is possible.

As this user warns: asking AI to sexualize or strip individuals is a punishable offense that can lead to jail time. Urgent, firm regulation of AI spaces is needed.

United States: The TAKE IT DOWN Act (2025)

On May 19, 2025, President Donald Trump signed the landmark TAKE IT DOWN Act into law—the most significant federal legislation addressing AI-generated non-consensual intimate images.

Key provisions:

United Kingdom: Crime and Policing Bill 2025

The UK has taken aggressive action against AI-generated intimate imagery.

Key provisions:

India: Specific Legal Provisions

Applicable laws:

Penalties can include:

Community Activism: Fighting Back

Users are organizing petitions on Change.org calling for action against X/Grok for allowing the generation of what they describe as sexual abuse material. The activism is growing.

Digital Hygiene: Protecting Yourself in the AI Era

While platforms should protect us, we must take proactive steps. Here is a practical Digital Hygiene Checklist to minimize your risk in the current landscape.

🛡️ The Anti-AI Exploitation Checklist

| Step | Action | What to Do and Why |
|---|---|---|
| 1 | Lock Your Accounts | Switch IG/Twitter to Private. Remove bots/strangers. Reduces surface area for scrapers. |
| 2 | Audit Profile Pics | Use low-res, obscured, or verified-only visibility for profile pics. Avoid high-res face shots as avatars on open platforms. |
| 3 | Watermark Sensitive Posts | Use visible watermarks, or invisible noise filters (like Nightshade or Glaze), on creative work and photos. Note that Nightshade and Glaze were built to protect artwork from AI training and style mimicry, so treat them as partial protection against direct photo edits. |
| 4 | Google Yourself | Run a reverse image search on your best photos. Request removal from scrapers/aggregators. |
| 5 | Enable 2FA | Prevents account hijacks that could be used to gather more private data. |
| 6 | Use Block Lists | Subscribe to shared blocklists (e.g., Red Block) to mass-block users known for AI harassment. |

If You're a Victim

Immediate steps:

Conclusion: A Call for Accountability

The Grok AI controversy is more than a PR crisis for xAI—it's a fundamental test of whether the AI industry can self-regulate or whether governments must step in with heavy restrictions.

Elon Musk's "Perfect" response to his own bikini image may have been meant as humor, but it normalized and endorsed functionality that has caused real harm to real people—primarily women who never consented to having their images sexualized and publicly distributed.

If you're building AI: Just because you can doesn't mean you should.

If you're regulating AI: "Self-regulation" has clearly failed.

If you're using AI: Your choices matter. Choose ethics over exploitation.
